Nvidia’s reported plan to invest about $30 billion in OpenAI, as part of a mega‑round that could exceed $100 billion, is not just another funding milestone. It concentrates enormous systemic risk in a single, still‑unproven AI lab. The problem isn’t that OpenAI “needs more money.” The problem is that its entire model rests on vast, recurring capital injections to sustain a cost base that looks more like a leveraged utility than a software business.

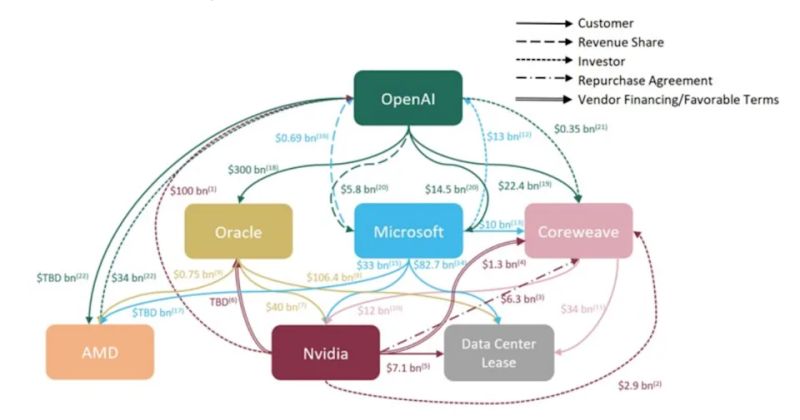

OpenAI’s economics remain deeply fragile. It spends tens of billions on compute, data centers, and staff while still losing large sums every year, and much of any new capital will flow straight back to chip and cloud providers. That creates circular revenue flows: investors fund a customer so the customer can pay the supplier, and in this deal the investor and the supplier are the same company. Such loops inflate apparent demand and valuations without fixing the underlying unit economics. Nvidia, already the dominant supplier of AI compute, becomes even more financially intertwined with one of its most capital‑hungry customers, which raises the stakes if OpenAI’s model proves unsustainable.

This deal amplifies several systemic risks. First, it deepens the hyper‑concentration of dependencies: governments, banks, and thousands of startups are building on top of one lab whose long‑term solvency and pricing power are unclear. Second, it reinforces a fragile structure in which every extra dollar of revenue requires even more capex, chips, and power, with no guaranteed path to stable margins. Third, it feeds bubble dynamics: capital, chips, and hype chase each other in a loop, while much of the stack remains structurally unprofitable.

Layered on top of this are governance and leadership concerns. OpenAI has already gone through public boardroom turmoil and leadership crises, revealing unresolved tensions around control, mission, and commercialization. Yet this same organization is being allowed to become critical infrastructure for the global tech economy. When you combine huge leverage, concentrated dependencies, and a leadership culture that often looks improvisational rather than financially disciplined, you’re effectively betting the ecosystem on a small group’s judgment.

The core risk, therefore, is systemic: if OpenAI later stumbles, reprices sharply, or is forced into painful adjustments once public markets or credit conditions tighten, the shock will not stay contained. It will hit Nvidia, hyperscalers, AI‑heavy indices, and the thousands of companies built on OpenAI’s stack, damaging sentiment around the entire generative AI industry. Instead of forcing a pivot to sustainable unit economics and diversified infrastructure, this kind of mega‑round doubles down on a structure that is already showing its limits.