Two years ago, financial institutions were asking:

“Should we experiment with AI?”

Today the conversation has changed.

“How do we control it?”

Across banks, credit funds, and asset managers, organizations are now developing formal AI policy frameworks to govern how artificial intelligence is used internally.

The Challenge: AI Without Policy

Generative AI tools introduce new operational risks:

• data leakage

• hallucinated financial outputs

• compliance violations

• lack of auditability

• unverified analysis

For financial institutions, these risks extend beyond technology—they become regulatory issues.

This is why many firms are beginning to treat AI systems much like financial models, bringing them under model risk management frameworks.

What an AI Policy Framework Looks Like

Effective AI governance policies generally include four core layers.

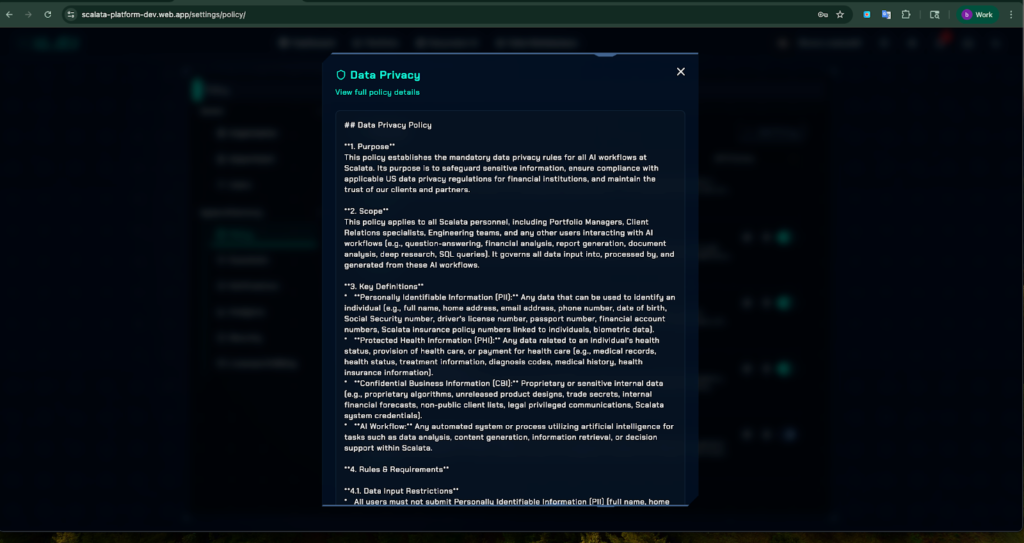

1. Data Access Policies

AI tools must not ingest sensitive financial data without proper authorization.

Policies typically define:

- approved datasets

- restricted confidential information

- encryption and storage requirements

Scalata addresses this by allowing institutions to control which data sources AI agents can access and analyze.
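As a generic illustration of the policy elements listed above (not Scalata's actual API), a deny-by-default access check might look like the sketch below. The class name, field names, and dataset labels are all hypothetical and chosen only for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataAccessPolicy:
    """Hypothetical policy object; every field name here is illustrative."""
    approved_datasets: frozenset[str]
    restricted_datasets: frozenset[str] = field(default_factory=frozenset)
    require_encryption_at_rest: bool = True


def can_access(policy: DataAccessPolicy, dataset: str, encrypted_at_rest: bool) -> bool:
    """Deny by default: allow only approved, unrestricted, compliant datasets."""
    if dataset in policy.restricted_datasets:
        return False  # restricted confidential information is never exposed
    if dataset not in policy.approved_datasets:
        return False  # anything not explicitly approved is rejected
    if policy.require_encryption_at_rest and not encrypted_at_rest:
        return False  # storage requirement not met
    return True


# Example policy with made-up dataset labels.
policy = DataAccessPolicy(
    approved_datasets=frozenset({"public_filings", "internal_research_notes"}),
    restricted_datasets=frozenset({"client_account_data"}),
)
```

The deny-by-default choice means a newly added dataset stays invisible to AI tools until someone explicitly approves it.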

2. Use-Case Classification

Not every AI application carries the same risk.

Many financial institutions classify AI usage into:

- Low risk: research assistance and summarization

- Moderate risk: internal financial analysis

- High risk: credit underwriting or trading decisions

Scalata’s structured workflows help organizations standardize how AI is used in these environments.
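One way to make the three tiers above machine-checkable is a simple lookup table. This is a minimal sketch under stated assumptions: the use-case labels are hypothetical, and defaulting unknown use cases to the highest tier is a design assumption, not something the tiers themselves prescribe.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


# Illustrative mapping based on the tiers described above; keys are hypothetical labels.
USE_CASE_TIERS = {
    "research_summarization": RiskTier.LOW,
    "internal_financial_analysis": RiskTier.MODERATE,
    "credit_underwriting": RiskTier.HIGH,
    "trading_decision": RiskTier.HIGH,
}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a use case; unclassified use cases default to HIGH (assumption)."""
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
```

Treating unclassified use cases as high risk keeps new applications under the strictest controls until they are reviewed.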

3. Output Verification

AI outputs should support analysis—not replace decision-making.

Financial teams require:

• human review

• documented reasoning

• traceable sources

Scalata ensures outputs are structured and source-traceable, allowing teams to validate insights quickly.

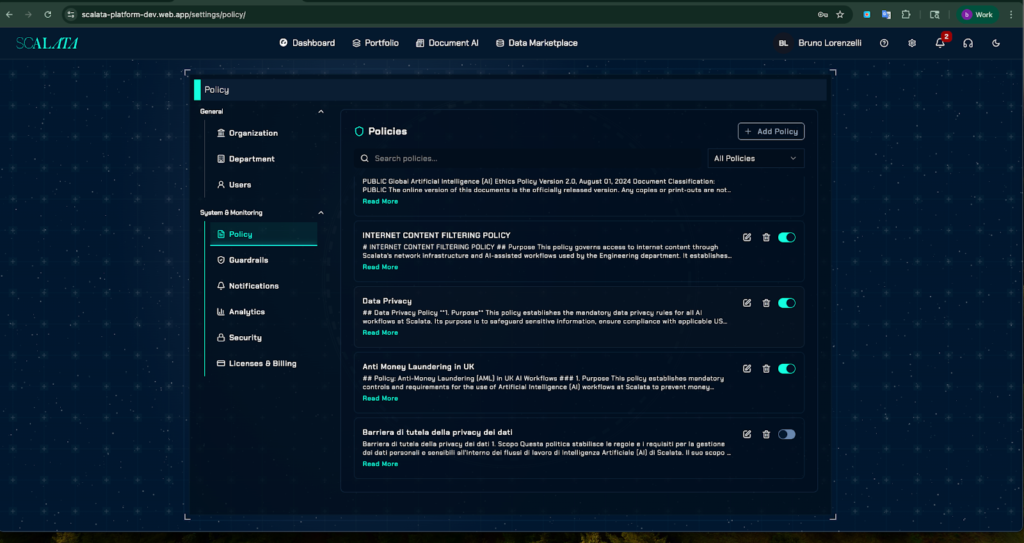

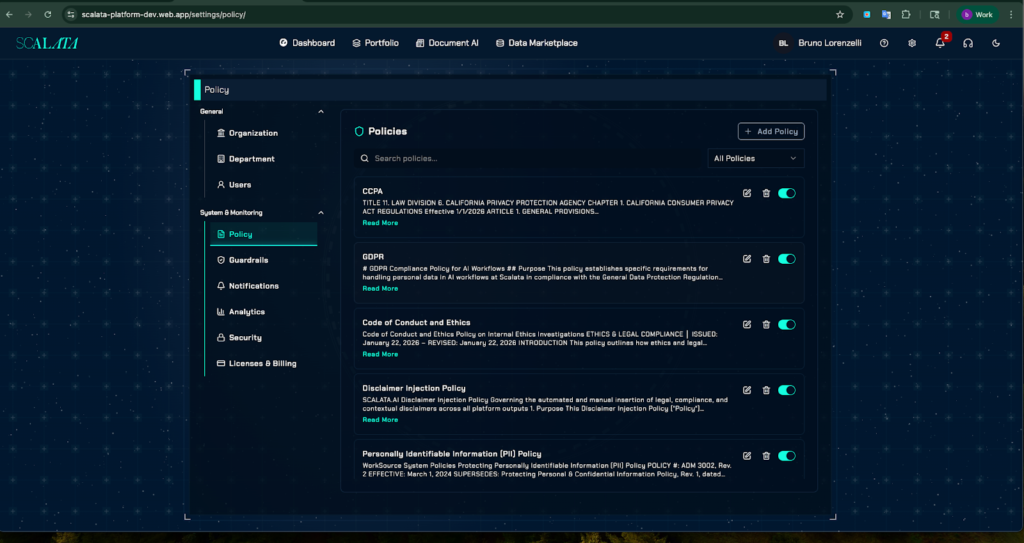
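The three verification requirements above (human review, documented reasoning, traceable sources) can be expressed as a gate that reports what is still missing before an output is relied on. The sketch below is illustrative only; the `AIOutput` type and its fields are assumptions and do not describe any particular platform.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIOutput:
    """Hypothetical container for an AI-generated analysis."""
    summary: str
    reasoning: str = ""
    sources: list[str] = field(default_factory=list)
    reviewed_by: Optional[str] = None  # name or ID of the human reviewer, if any


def verification_gaps(output: AIOutput) -> list[str]:
    """List the verification requirements this output does not yet satisfy."""
    gaps = []
    if not output.reviewed_by:
        gaps.append("human review")
    if not output.reasoning.strip():
        gaps.append("documented reasoning")
    if not output.sources:
        gaps.append("traceable sources")
    return gaps


draft = AIOutput(summary="Borrower leverage has increased year over year.")
```

An empty list means the output has passed all three checks; anything else names what a reviewer still needs to supply.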

4. Monitoring and Logging

Compliance teams must be able to review how AI systems are used internally.

Platforms must log:

- prompts

- outputs

- sources

- user activity

- checks on data storage, model training, and misuse

- alerts

Scalata keeps audit-ready research logs, enabling institutions to maintain oversight.
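To make the logging requirement concrete, the core fields from the list above (prompts, outputs, sources, user activity) could be captured as one JSON record per interaction. This is a minimal sketch, assuming a JSON-lines log; the function name and field names are hypothetical and not drawn from any specific product.

```python
import json
from datetime import datetime, timezone


def build_audit_record(user: str, prompt: str, output: str, sources: list[str]) -> str:
    """Serialize one AI interaction as a single JSON line for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC time of the interaction
        "user": user,
        "prompt": prompt,
        "output": output,
        "sources": sources,
    }
    return json.dumps(record, sort_keys=True)


line = build_audit_record(
    user="analyst_1",
    prompt="Summarize the borrower's latest annual filing.",
    output="Revenue grew while leverage increased.",
    sources=["annual_report.pdf"],
)
```

Writing one self-contained record per line keeps the log easy to append to and easy for compliance teams to filter by user or source.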

Real Use Cases Across Financial Institutions

AI policy frameworks are already shaping how financial organizations deploy AI.

Credit Teams

AI can accelerate borrower analysis by summarizing financials and identifying risk signals.

Using structured research agents, Scalata allows credit teams to generate standardized credit insights in minutes rather than hours.

Asset Managers

Investment professionals use AI to analyze markets, earnings reports, and economic signals.

Scalata’s deep research workflows allow analysts to synthesize complex financial data into structured investment insights.

Compliance Teams

Regulatory teams need to track policy changes and assess risk exposure.

AI-driven research workflows can help compliance teams monitor regulatory developments and summarize key updates.

Policy Must Be Supported by Infrastructure

Written policies alone cannot control AI usage.

Financial institutions need platforms that enforce governance through technology.

This includes:

• structured research workflows

• data governance controls

• audit logging

• traceable outputs

Scalata.ai was designed around this policy-driven architecture, allowing institutions to scale AI adoption while maintaining compliance oversight.